There's one redesign that could change everything about digital politics

Plus: Is it ethical to put words in someone’s mouth with A.I. if they wrote them?

Social media companies were handed a major loss Wednesday after a California jury found Meta and Google negligent for designing their platforms to be harmful for kids. Do democracy next.

This week’s verdict followed a novel line of attack from the plaintiff. Online platforms have long been shielded by Section 230, the federal law that protects sites and apps from liability for third-party content, but in this case, lawyers argued the harm was really a design problem. Companies engineer their apps to be purposefully addictive for young users with features like “infinite scroll,” “autoplay,” and “filters,” attorneys for the plaintiff contended, and the jury agreed.

“These companies built machines designed to addict the brains of children, and they did it on purpose,” attorney Mark Lanier said during the case. “When you’re making money off kids, you have to do it responsibly.” The same might be said of making money off our politics.

The landmark case decided this week involved a young woman, so it focused on predatory design features that especially target girls, like Instagram’s beauty filters. But consider how social media’s dark design patterns affect our most hyper-partisan posters, podcasters, and politicians. Social media might not be giving them body dysmorphia, but it’s warping their worldviews and incentives in other ways.

Designed for engagement, optimized for rage

Social media is designed for engagement. The longer you stay on, the more ads apps can serve you. As evidence that the apps are optimized to keep users on longer, Lanier showed the court a 2015 email from Meta CEO Mark Zuckerberg calling for a 12% increase in time spent on the app. When it comes to politics, one surefire way to boost time in-app is to stoke anger and rage.

The engagement-based algorithm of Twitter, now known as X, amplifies posts that are emotionally charged and hostile to out-partisans, according to one study published in 2024. On Facebook, the algorithm has even shaped the way elected officials lead their countries: Facebook whistleblower Frances Haugen said in 2021 that an internal company report found that, after an algorithm change, European political parties “feel strongly that the change to the algorithm has forced them to skew negative in their communications on Facebook… leading them into more extreme policy positions.”

The precedent set by the California case now looms over thousands of other suits challenging social media companies because of design choices they say harm young people. It also puts social network UX and UI under greater scrutiny.

There’s a growing sense in the U.S. that social media is bad. The percentage of U.S. teens who view it as mostly negative grew from 32% to 48% from 2022 to 2024, according to Pew Research Center, and Pew also found a 64% majority of U.S. adults view social media as a bad thing, higher than in any other country polled. Maybe a redesign could help.

For now, social media platforms seem designed to suit the needs of their owners. Whether that means nudging the algorithm’s ideological alignment in a certain direction or increasing engagement time even if it corrodes the national discourse, scrolling and posting today can feel designed more to benefit billionaires like Elon Musk (X), Zuckerberg (Meta), and President Donald Trump (Truth Social) than to provide a positive user experience that strengthens our community, nation, and world.

Voters and donors don’t reward lawmakers for optimizing their public speech to go viral online, but X does. Redesigning social media to bring people together rather than drive them apart could be just the fix we need.

Is it ethical to put words in someone’s mouth with A.I. in a campaign ad if they wrote them?

Political professionals are split.

Political admakers know that for an attack ad, a clip of their client’s opponent saying something damaging on camera beats the same words shown only in text. With a clip, viewers can see it, hear it, and read it, which better captures attention on visual channels like television or YouTube. It’s why opposition researchers have used “trackers” to record candidates on camera at events, and it’s why, more recently, some admakers have turned to artificial intelligence.

Is it OK to put words in someone’s mouth that they wrote but didn’t actually say? Political professionals are divided, according to a new survey conducted this month by the American Association of Political Consultants, or AAPC. The survey of its members found that 48% believe that “realistic audio of candidate’s printed quote” is ethical when disclosed, 40% believe it’s not, and 12% say it depends or they don’t know.

Democratic Texas state Rep. James Talarico is the latest to have his likeness recreated in an A.I.-generated spot. The National Republican Senatorial Committee, or NRSC, released an A.I.-generated video earlier this month depicting Talarico reciting some of his most woke takes, which he had previously only posted on X. Though the video is labeled as A.I. at the beginning, for most of the clip only a small, barely visible label appears in the corner, and the A.I.-generated Talarico also adds commentary the real one never wrote, such as praising his own tweets. Some liberties were taken.

Texas state law bans A.I.-generated “deepfake” political ads within 30 days of an election, and the Talarico video inspired some pushback. “These deepfakes are dangerous and wrong. We need protections not just for politics, but for all Americans that could be targeted,” Sen. Andy Kim (D-N.J.) wrote on X. Could this be the direction political advertising takes? Potentially. But there’s also evidence that political professionals and voters have concerns.

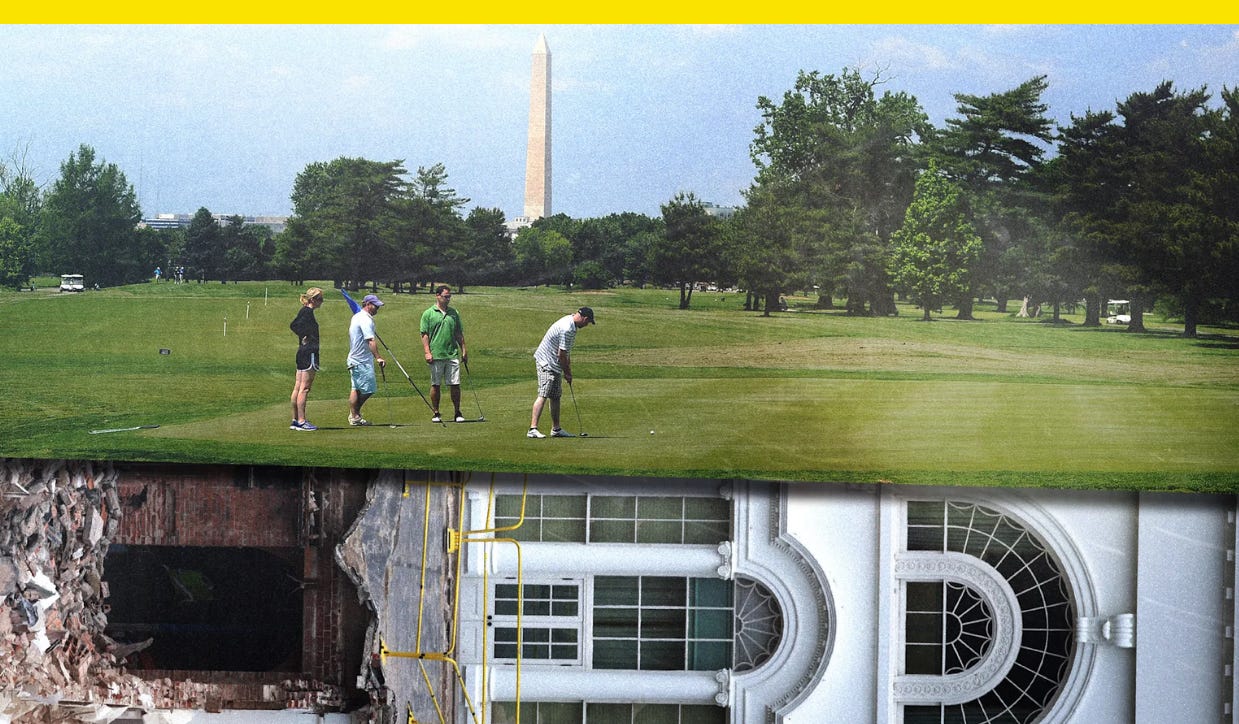

Trump’s plans for a Washington, D.C., golf course built on East Wing rubble

Trump is burying the East Wing’s rubble at the site of a municipal golf course he wants to redesign.